What Vibe Coding Got Right

To be fair: vibe coding delivered something real.

For the first time, a person with a clear idea and no engineering background could turn that idea into running code within hours. Barriers that had existed for decades — syntax, tooling, environment setup, the sheer impenetrability of a blank IDE — dissolved. The time from concept to first-working-thing collapsed in ways that would have seemed implausible five years ago.

That is not nothing. That is, in fact, a genuine revolution in access.

Vibe coding also shook the software development community in a productive way. It challenged assumptions about what required human expertise, forced a reckoning with what engineers actually add beyond code generation, and made AI-assisted development a mainstream conversation rather than a niche experiment.


But revolutions in access are not the same as revolutions in outcomes. And the outcomes — for real products, serving real users, under real-world conditions — are where the story gets complicated.

Before going further, let me address something directly. If you have followed this work for a while, you may be raising an eyebrow right now.

“A few months ago you were singing vibe coding’s praises like it was the best thing since sliced bread. Now you’re telling me we’re past it?”

Fair. And the honest answer is: yes. Both things are true.

A few months ago, vibe coding was the right frame. The tools had just crossed a threshold. The acceleration was real and needed to be named. If you were an experienced engineer who had not yet tried these tools seriously, the message you needed to hear was: stop hesitating, get in the room, the productivity gains are genuine. And they were. That message was correct for that moment.

What has changed is not only the tools but the context around them. The tools kept improving, faster than most predicted. The use cases expanded from individual engineers into teams, into organizations, into the hands of people with no engineering background at all. And as the scope expanded, the gap between “vibe-coded prototype” and “production-ready system” became more consequential and more visible.

This is not a contradiction. It is how process improvement actually works. Think of any transformative methodology in software delivery — Agile, DevOps, cloud-native. Each one arrived as a provocation: stop doing the old thing, try this new approach, the gains are real. And each one, as it matured, developed a fuller body of practice — not because the original insight was wrong, but because the original insight opened a door that led to a larger room.

Vibe coding opened the door. AI-Assisted Software Engineering (AIASE) is the larger room. The principles that made vibe coding exciting are still intact: AI as collaborator, conversation as the unit of development, dramatic compression of time from idea to working code. What expands is the execution: the recognition that the conversation must cover the full surface of software engineering, not just the parts that are visible in a demo.

Changing your mind when the evidence changes is not inconsistency. In a field moving this fast, it is the only rational response.

The Ceiling Nobody Talks About

Here is what nobody tells you in the vibe coding demos: the demo is the easy part.

Getting a UI to render, a database to accept writes, an API to return a 200: these are achievements. They are also only the tip of the iceberg. Below the waterline sits everything that determines whether software actually survives contact with the real world.

Software engineering is not a one-shot deal. It is an extended, disciplined conversation — conducted across time, across specialties, and across a set of concerns that do not resolve themselves in a single chat session. Those concerns existed before AI. They exist now. The tools have changed. The surface has not.

The citizen developer — the genuinely non-technical person who can build production-quality software with an AI team and no engineering background — is a compelling and achievable vision. It is also a few years away from being real. Today, without sufficient technical foundation, the vibe coding ceiling appears faster than most people expect.

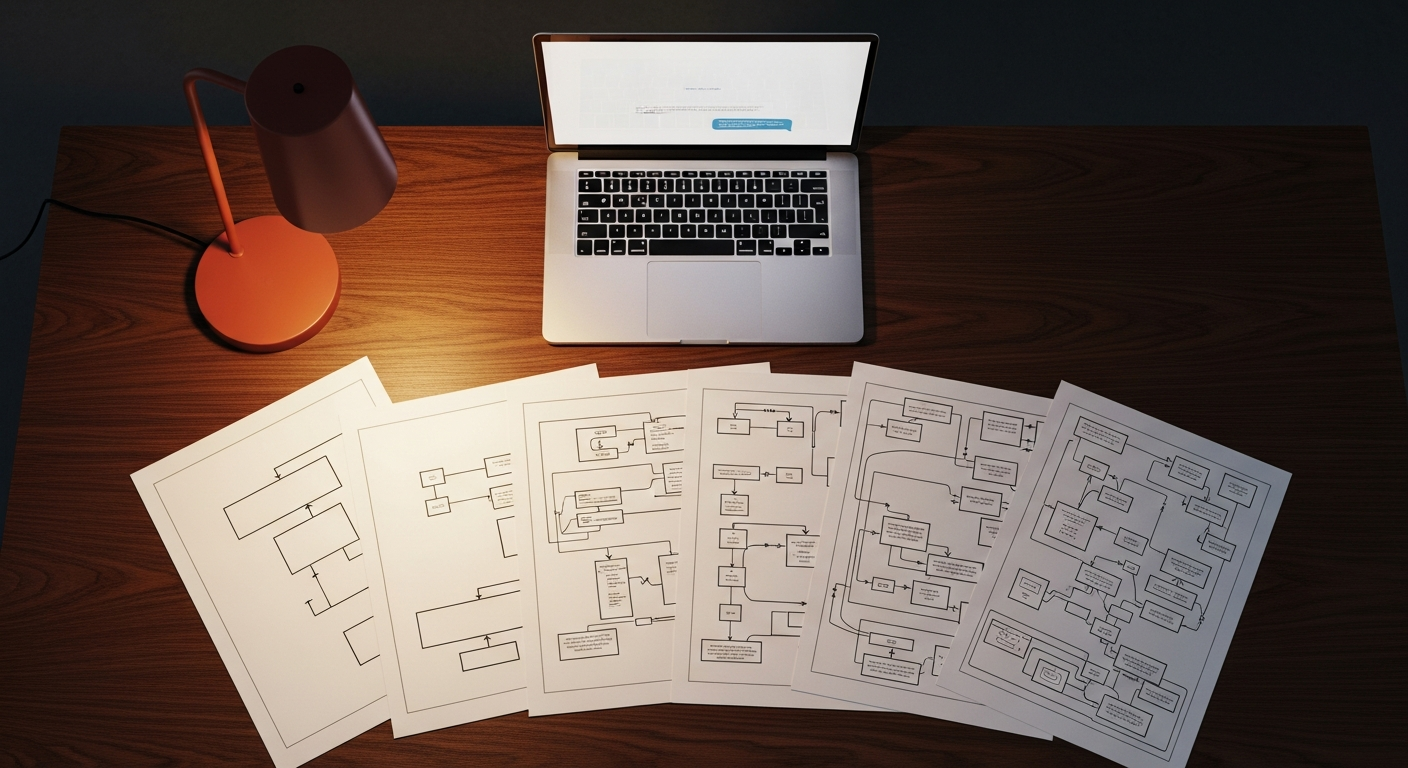

What does that ceiling look like in practice? It looks like six disciplines, each of which demands its own sustained conversation.

The Six Disciplines That Cannot Be Prompted Away

Each discipline requires a deliberate, structured conversation with your AI team — and enough technical foundation to ask the right questions, challenge the generated outputs, and recognize when something has been missed.

01 Requirements: Functional and Non-Functional

Functional requirements — what the system should do — are the part people remember to discuss. Non-functional requirements (NFRs) — how the system should perform, scale, fail gracefully, protect data, and comply with regulations — rarely make it into the initial prompt. Yet NFRs are frequently where products live or die. Latency thresholds. Throughput under load. Availability commitments. Privacy regulations that differ by geography. These are not edge cases. They are the foundation.
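One way to keep NFRs from staying implicit is to write them down as budgets that a test can check. The sketch below is illustrative only: `NfrBudget`, `check_nfrs`, and the threshold values are hypothetical names and numbers, not part of any particular framework, and a real system would pull its measurements from load-testing or monitoring tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NfrBudget:
    """Hypothetical non-functional budget for one endpoint (illustrative fields)."""
    p95_latency_ms: float
    min_availability: float  # fraction of successful requests, e.g. 0.999


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with pct% of the data at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct/100 * n
    return ordered[max(rank, 1) - 1]


def check_nfrs(budget: NfrBudget, latencies_ms: list[float], ok: int, total: int) -> list[str]:
    """Return human-readable violations; an empty list means the budget is met."""
    if total <= 0:
        raise ValueError("total must be positive")
    violations = []
    p95 = percentile(latencies_ms, 95)
    if p95 > budget.p95_latency_ms:
        violations.append(f"p95 latency {p95:.0f} ms exceeds {budget.p95_latency_ms:.0f} ms")
    availability = ok / total
    if availability < budget.min_availability:
        violations.append(f"availability {availability:.4f} below {budget.min_availability:.4f}")
    return violations
```

The design choice worth noting is that the budget lives in code rather than in someone's head, so a release pipeline can fail when a threshold is missed instead of relying on a reviewer to remember it.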

02 Business Architecture: Governance, Compliance, and IP

Technology does not exist in a legal or organizational vacuum. What is the operational model: who operates this system, in what jurisdiction, under what contractual obligations? Is this product subject to HIPAA, SOC 2, PCI DSS, or GDPR? Who owns the intellectual property generated by the system, and by the AI that helped build it? A citizen developer working from instinct will often make implicit assumptions that carry significant legal exposure. These questions must be mapped before architecture decisions are made.

03 Technical Architecture

This is the domain where the gap between a productive weekend prototype and a scalable production system is most visible. Hosting architecture, component architecture, API and SaaS integration design, observability and monitoring, data architecture: each requires a dedicated conversation, not a follow-on task. Architectural decisions are expensive to reverse. Revisiting a monolith-versus-microservices decision six months into development typically costs far more than making the right decision at the outset.

04 Identity, Access Management, and Cybersecurity

Security is not a feature. It is a discipline, woven into the architecture from the first conversation, not retrofitted after the first breach report. IAM, input validation, injection prevention, secrets management, encryption in transit and at rest, least-privilege access: these require systematic coverage informed by established guidance such as the OWASP Top 10 and the NIST Cybersecurity Framework. A vibe-coded prototype that never saw a security review is not a product. It is a liability waiting to be discovered.
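Injection prevention is a useful concrete case, because the vulnerable version and the safe version behave identically in a demo. The following is a minimal sketch using Python's standard `sqlite3` module; the `users` table and the function names are assumptions made for illustration.

```python
import sqlite3


def demo_connection() -> sqlite3.Connection:
    """Build a throwaway in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Anti-pattern: user input is spliced directly into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the value is bound separately from the SQL text,
    # so it is always treated as data, never as SQL.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()
```

With ordinary input such as `"alice"` both functions return the same row. With an input like `x' OR '1'='1`, the unsafe version returns every row in the table, while the parameterized version returns nothing.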

05 DevOps: Operationalize, Test, and Layer in Safety

Getting software into production once is not the same as operating it reliably over time. CI/CD pipelines, infrastructure-as-code, deployment strategies that minimize blast radius, incident response runbooks: together these form the engineering discipline that keeps production safe across every release. For AI-native systems specifically, the challenges compound: model outputs are non-deterministic, behavior can drift as underlying models are updated, and human oversight mechanisms must be designed in from the start.
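A small example of designing oversight in: treat every model response as untrusted input, validate it, and route anything that fails to a person. This is a sketch under stated assumptions; `call_model`, the `approve`/`reject` action set, and the `needs_human_review` fallback are hypothetical placeholders, not a real vendor API.

```python
import json
from typing import Callable, Optional

# Hypothetical set of actions the downstream system is willing to execute.
ALLOWED_ACTIONS = {"approve", "reject"}


def parse_decision(raw: str) -> Optional[dict]:
    """Return a validated decision, or None if the output has the wrong shape."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    action = data.get("action")
    reason = data.get("reason")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return None
    if not isinstance(reason, str):
        return None
    return {"action": action, "reason": reason}


def decide(call_model: Callable[[], str], max_attempts: int = 3) -> dict:
    """Retry a bounded number of times, then escalate to a human reviewer."""
    for _ in range(max_attempts):
        decision = parse_decision(call_model())
        if decision is not None:
            return decision
    # Fallback: never act on output that failed validation.
    return {"action": "needs_human_review", "reason": "model output failed validation"}
```

The retry limit and the escalation path are the point here: because the same prompt can yield different outputs, the system needs a defined behavior for the case where none of them is acceptable.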

06 AI Integration, Cost Optimization, and GovOps

For systems that integrate AI at runtime, an increasing share of modern software, a sixth discipline applies. It covers LLM selection and cost optimization, prompt engineering and context management, AI guardrails and content safety, and AI governance and observability. AI GovOps, the governance of AI in production, is an emerging discipline with already-clear questions: How do you audit AI decisions? How do you detect and respond to model drift? How do you ensure compliance with evolving AI regulations?

What AIASE Actually Looks Like

AI-Assisted Software Engineering is not the absence of engineering discipline. It is that same discipline, amplified.

The practitioner who succeeds with AIASE brings traditional technical experience — hard-won knowledge of what makes systems fail, what makes them scale, what security mistakes look like in code, what a well-structured data model looks like versus a fragile one. They bring theoretical understanding: architecture patterns, engineering principles, security frameworks, DevOps practice. And they bring the judgment to direct their AI team effectively: asking the right questions, challenging the generated outputs, catching the edge cases the model didn’t consider.

The AI amplifies all of this. It removes the mechanical friction — the boilerplate, the scaffolding, the documentation burden, the configuration overhead. It allows a single experienced practitioner to cover ground that previously required a full team.

But the key word is practitioner. The amplifier multiplies what you bring to it. If you bring technical depth, AI amplifies your capability dramatically. If you bring a prompt and a wish, AI returns a prototype, one that looks convincing in a demo and behaves unpredictably in production.

AI-Assisted Software Engineering does not boil down to writing a short prompt in your favorite coding CLI.

We are past vibe coding. The question now is whether you have the foundation to use the amplifier well, and the discipline to conduct the full conversation that production-quality software demands.

The Citizen Developer Vision: Real, But Not Yet

The vision of the true citizen developer — a motivated, non-technical person who can build production-quality software through a sustained, intelligent conversation with an AI team — is not fantasy. It is where this technology is heading.

The trajectory is clear. Each generation of AI tools reduces the technical overhead required to produce reliable software. Architectural guardrails are being embedded into development environments. Security analysis is becoming increasingly automated and proactive. Observability tooling is growing more self-managing.

There is a credible path from here to a world where a domain expert — a clinician, a logistics manager, an educator — can build the software their domain requires without a software engineering background, and where the AI team handles the full depth of the six disciplines described above.

We are a few years from that world. The tools are not yet there. More importantly, the AI judgment required to navigate the failure modes of these disciplines — without human oversight from someone who knows what the failure modes look like — is not yet there.

Until it is, the most dangerous position an organization can take is assuming the distance has already been closed.

Don’t Be Fooled

The promise of vibe coding was real. The ceiling is real. The path forward — through AI-Assisted Software Engineering, with all of its disciplines intact — is also real.

The organizations that will build competitive advantage in the AI era are not the ones that discovered the short prompt. They are the ones that understood what the short prompt can and cannot do, built the practitioner capability to use AI tools at their full depth, and invested in the engineering discipline required to turn AI-generated code into reliable, secure, compliant, operable products.

That is the work. It is harder than a demo. It is also more durable than a prototype.

The question worth asking — honestly, in your next architectural review or technology strategy discussion — is whether your organization is building for the demo or building for the long run.